🎛️ Why Live Matters

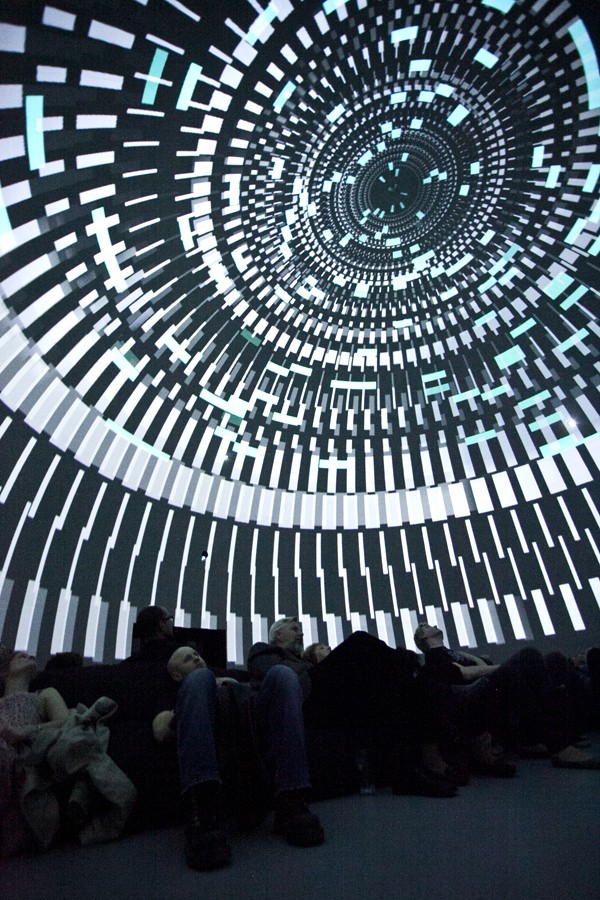

Most fulldome content is pre-rendered — painstakingly crafted frame by frame, then played back on dome projection systems. But live performance flips that model entirely. Instead of pressing play, an artist generates visuals and sound in real time, responding to the audience, the music, and the moment.

This isn't new. The SAT (Société des arts technologiques) in Montréal has been hosting live performances in its Satosphère since 2011. Planetariums in Berlin and Hamburg regularly program concerts under the dome. And a new generation of VJ tools — led by TouchDesigner and Resolume — has made it possible for a single laptop to drive an entire dome in real time.

The result is a fundamentally different art form: one where the dome becomes an instrument, not a screen.

🔗 How It Works: Signal Flow

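A live dome performance requires a chain of software and hardware to get real-time visuals from an artist's laptop onto the curved surface of a dome. In the typical signal flow, a content tool such as TouchDesigner or Resolume renders a domemaster frame and shares it over Syphon/Spout (same machine) or NDI (across machines) with a dome mapping application, which slices, warps, and edge-blends it for the projectors. Controllers and sensors feed the content tool over MIDI and OSC.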

Key Protocols

- Syphon (macOS) / Spout (Windows) — GPU-level frame sharing between applications on the same machine. Zero-copy, near-zero latency. This is how a VJ tool sends its output to a dome mapping application.

- NDI (NewTek) — network-based video streaming protocol. Higher latency than Syphon/Spout but works across machines. Used when content generation and dome mapping run on separate computers.

- OSC (Open Sound Control) — network protocol for sending control messages between software. Used to sync visual parameters with audio, trigger cues, or connect sensors.

- MIDI — hardware controller protocol. Faders, knobs, buttons → software parameters. Still the most common way to physically control a live dome show.

- DMX — lighting control protocol. Used when dome performances include stage lighting, LED fixtures, or other show elements beyond projection.
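To make the MIDI item above concrete, here is a minimal sketch of how a fader move might be turned into a visual parameter. It parses a raw 3-byte Control Change message and scales its 7-bit value onto a parameter range. The controller numbers, parameter names, and ranges in `FADER_MAP` are hypothetical; real tools expose this through their own MIDI-learn or mapping interfaces.

```python
def parse_control_change(msg: bytes):
    """Return (channel, controller, value) for a 3-byte MIDI Control Change, else None."""
    if len(msg) != 3:
        return None
    status, controller, value = msg
    if status & 0xF0 != 0xB0:  # 0xB0-0xBF = Control Change on channels 0-15
        return None
    if controller > 127 or value > 127:  # data bytes are 7-bit
        return None
    return status & 0x0F, controller, value


def scale_cc(value: int, lo: float, hi: float) -> float:
    """Map a 7-bit CC value (0-127) linearly onto [lo, hi]."""
    return lo + (hi - lo) * (value / 127.0)


# Hypothetical mapping: controller number -> (parameter name, min, max)
FADER_MAP = {
    1: ("rotation_speed", 0.0, 2.0),
    7: ("master_brightness", 0.0, 1.0),
}


def apply_midi(msg: bytes, params: dict) -> dict:
    """Update params in place if msg is a mapped Control Change; ignore anything else."""
    parsed = parse_control_change(msg)
    if parsed is None:
        return params
    _, controller, value = parsed
    if controller in FADER_MAP:
        name, lo, hi = FADER_MAP[controller]
        params[name] = scale_cc(value, lo, hi)
    return params


# Fader on controller 7 pushed all the way up, channel 0
params = apply_midi(bytes([0xB0, 7, 127]), {})
print(params)
```

The 7-bit resolution (128 steps) is worth keeping in mind: slow fades on a large dome can show visible stepping unless the receiving software smooths the incoming values.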

Latency Considerations

For live performance, latency is everything. The chain from artist input to dome projection needs to feel instantaneous. Syphon/Spout achieve this with shared GPU textures (sub-millisecond). NDI adds 1–3 frames of latency. The projectors themselves add 1–2 frames depending on processing. Total glass-to-glass latency for a well-configured dome: 30–80ms — acceptable for VJing, tight for live musical synchronization.
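The figures above can be sanity-checked with simple arithmetic. The sketch below converts the per-stage frame counts into milliseconds and sums them. It assumes a 60 fps pipeline, treats Syphon/Spout sharing as negligible, and leaves out the content tool's own render time, so it is a rough budget rather than a measurement.

```python
def frames_to_ms(frames: float, fps: float) -> float:
    """Duration of a number of frames at a given frame rate, in milliseconds."""
    return frames * 1000.0 / fps


def latency_budget_ms(fps: float, stages):
    """stages: list of (name, min_frames, max_frames). Returns (min_ms, max_ms)."""
    lo = sum(frames_to_ms(mn, fps) for _, mn, _ in stages)
    hi = sum(frames_to_ms(mx, fps) for _, _, mx in stages)
    return lo, hi


# Frame counts taken from the paragraph above; 60 fps is an assumption
STAGES = [
    ("NDI transport", 1, 3),
    ("projector processing", 1, 2),
]

lo, hi = latency_budget_ms(60.0, STAGES)
print(f"{lo:.0f} to {hi:.0f} ms")  # 33 to 83 ms at 60 fps
```

At 30 fps the same frame counts double in milliseconds, which is one reason a steady high frame rate matters more for live work than for playback.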

🖥️ Software Tools for Live Dome Performance

The toolchain for live dome work has matured significantly. Here are the key applications, from dedicated dome tools to creative coding environments.

TouchDesigner

Win · macOS

The dominant tool for live dome visuals. Node-based visual programming environment by Derivative. Renders domemaster output natively, supports Syphon/Spout/NDI, and handles everything from generative geometry to audio-reactive particle systems. Used at SAT Montréal, Zeiss-Großplanetarium Berlin, and by most professional dome VJs. Free non-commercial license available.

Resolume Arena

Win · macOS

Industry-standard VJ software and media server. Arena (the full version) includes projection mapping, multi-projector output, edge blending, and fisheye/dome output modes. Supports Syphon, Spout, and NDI input/output. Powerful MIDI/OSC mapping and audio analysis (FFT). DMX control from lighting desks. €799 for Arena.

VDMX6

macOS only

Fully customizable real-time video mixing application by Vidvox. Hardware-accelerated layer-based rendering with Syphon and NDI support. Powerful audio analysis and BPM detection for syncing visuals with live music. Accepts TouchDesigner, Quartz Composer, and ISF/GLSL as live sources. Deeply extensible via MIDI, OSC, DMX, and HID control.

Blendy Dome VJ

macOS

Purpose-built fulldome mapping and slicing software by Pedro Zaz / United VJs and StudioAvante. Takes a domemaster input via Syphon and slices it for up to 6 projectors with automatic edge blending. Simulates dome projection for pre-visualization. The missing link between VJ software and actual dome projection hardware. Optimized for HAP codec.
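Edge blending is easier to reason about with a small example. The sketch below is not Blendy Dome VJ's algorithm; it is a generic illustration that assumes each projector's light output follows a pure power law with gamma 2.2. Two projectors cross-fade across their overlap so that the emitted light always sums to full brightness.

```python
def smoothstep(t: float) -> float:
    """Smooth 0-to-1 ramp with zero slope at both ends; input is clamped to [0, 1]."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def blend_pair(t: float, gamma: float = 2.2):
    """Pixel-value gains (left, right) at position t across the overlap.

    t = 0 is the edge where only the left projector contributes,
    t = 1 is the edge where only the right projector contributes.
    """
    right_light = smoothstep(t)       # desired share of emitted light
    left_light = 1.0 - right_light
    # Pre-compensate for the assumed projector response: light = value ** gamma
    return left_light ** (1.0 / gamma), right_light ** (1.0 / gamma)


def emitted_light(gain: float, gamma: float = 2.2) -> float:
    """Light produced by a full-white pixel scaled by gain, under the power-law assumption."""
    return gain ** gamma


for t in (0.0, 0.25, 0.5, 0.75, 1.0):
    left, right = blend_pair(t)
    total = emitted_light(left) + emitted_light(right)
    print(f"t={t:.2f}  left={left:.3f}  right={right:.3f}  total light={total:.3f}")
```

Without the gamma compensation, a plain linear cross-fade of pixel values would leave a visibly dark band in the middle of the overlap.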

MadMapper

Win · macOS

Projection mapping software with support for dome and curved surface warping. Accepts Syphon/NDI input and can map content onto complex geometries. Strong lighting integration via DMX/Art-Net. Often used alongside other VJ tools as the final mapping stage before projectors.

Notch

Windows

Next-generation GPU-accelerated real-time graphics engine. Four physically-based renderers, node-graph workflow, and tight integration with media servers. Used heavily in concert touring (Dua Lipa, major festivals). Can output to dome configurations via Spout/NDI. Windows only, professional pricing.

Digistar 7

Venue System

Evans & Sutherland's planetarium platform, found in hundreds of domes worldwide. Supports live presenter control via iPad, real-time universe navigation, Domecasting (live multi-venue collaboration), and Unreal Engine integration with alpha transparency. Not a VJ tool, but the dominant platform for live-presented planetarium shows.

Processing / openFrameworks

Cross-platform

Creative coding frameworks for artists. Processing (Java-based) and openFrameworks (C++) both support fisheye/equirectangular rendering and Syphon/Spout output. Lower-level than TouchDesigner but offer complete creative freedom. Used by artists who want to code their visuals from scratch. Both free and open source.

Max/MSP + Jitter

Win · macOS

Cycling '74's visual programming environment for audio, video, and interactive media. Jitter handles real-time 3D graphics and video processing with OpenGL. Syphon output support. Particularly strong for audio-visual works where sound and image are tightly coupled. Ableton Live integration via Max for Live.

Hydra

Browser

Browser-based live coding environment for video synthesis by Olivia Jack. Write code → see visuals instantly. No installation needed. Outputs via browser window (capture with Syphon Virtual Server or OBS). Popular in the algorave and live coding communities. Completely free and open source.

TidalCycles

Cross-platform

Live coding environment for algorithmic music patterns, written in Haskell. Uses SuperCollider for synthesis and MIDI output. The engine behind most algorave performances. Paired with visual live coding tools (Hydra, Punctual), it enables fully code-driven audio-visual dome shows. Free and open source.

Sonic Pi

Cross-platform

The live coding music synth for everyone. Code-based music creation with an emphasis on accessibility and education. Outputs via SuperCollider. Can send OSC messages to visual software for audio-visual synchronization. Used in educational dome performances and live coding workshops. Free and open source.

Unreal Engine

Win · macOS · Linux

Epic Games' real-time 3D engine. nDisplay system enables multi-projector output. Off World Live plugin (mentioned in DFW forums) provides fulldome camera rigs. Digistar 7 integrates Unreal Engine content with alpha transparency for blending with planetarium data. Massive asset library. Free for non-commercial use.

Unity

Cross-platform

Cross-platform game engine with community-built fulldome camera rigs. Can render domemaster output in real time using cubemap-to-fisheye shaders. Spout/Syphon plugins available for frame sharing. Lower barrier to entry than Unreal for simple interactive dome experiences.

🎯 Deep Dive: Choosing Your Approach

The tool grid above gives you the landscape. Now let's go deeper into how each approach actually works for live dome performance — what it feels like to build with, where it excels, and where it falls short.

TouchDesigner

If there's one tool that the live dome community has converged on, it's TouchDesigner by Derivative. It has a native Fisheye Camera component that does exactly what you need, its node-based visual programming is fast to iterate, and its CHOP (Channel Operator) system handles audio analysis with genuinely no friction.

Plug in a microphone, drop an Audio Spectrum CHOP, and route the FFT values directly to a shader parameter. Done. Your visuals react to sound in ten minutes. The fisheye camera uses an equidistant projection by default — correct for most planetariums. The companion Kantan Mapper palette component handles multi-projector setups with soft-edge blending. Performance at 4K is achievable on an RTX 3080 or better — expect 30–60fps.
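For readers who want to see what "equidistant" means in practice, here is a minimal sketch of the mapping from a 3D view direction to a position on the domemaster image. In an equidistant fisheye, the distance from the image centre grows linearly with the angle from the zenith. The function name and the axis convention (+z pointing at the zenith) are assumptions for illustration; which image edge corresponds to the front of the dome depends on the venue.

```python
import math


def direction_to_domemaster(x: float, y: float, z: float, fov_deg: float = 180.0):
    """Map a direction vector to normalized domemaster coordinates (u, v) in [0, 1].

    Returns None if the direction falls outside the fisheye field of view.
    Assumes +z points at the zenith, which lands at the image centre (0.5, 0.5).
    """
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        return None
    theta = math.acos(max(-1.0, min(1.0, z / length)))  # angle from the zenith
    half_fov = math.radians(fov_deg) / 2.0
    if theta > half_fov + 1e-9:  # small tolerance for directions exactly on the rim
        return None
    r = min(1.0, theta / half_fov)  # equidistant: radius is proportional to angle
    phi = math.atan2(y, x)          # angle around the dome
    u = 0.5 + 0.5 * r * math.cos(phi)
    v = 0.5 + 0.5 * r * math.sin(phi)
    return u, v


print(direction_to_domemaster(0.0, 0.0, 1.0))  # zenith: image centre
print(direction_to_domemaster(1.0, 0.0, 1.0))  # 45 degrees up: halfway to the rim
print(direction_to_domemaster(1.0, 0.0, 0.0))  # horizon: on the rim
```

A cubemap-to-fisheye shader runs the inverse of this mapping for every output pixel: it turns (u, v) back into a direction and samples the cubemap there.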

Weakness: Not primarily an audio synthesis tool. For spatial dome audio, you'll bridge out via OSC to SuperCollider.

SuperCollider + ATK

SuperCollider is the other anchor of live dome work — not for visuals, but for sound. The Ambisonic Toolkit (ATK) for SuperCollider is a research-grade HOA implementation. It handles First and Higher Order Ambisonics — encoders that place sound in 3D space, decoders that render to your dome's speaker array, transformers that rotate and zoom the soundfield in real-time.

What you get is a live-coded ambisonics engine. Write a Synth, place it at coordinates, move it. Use OSCFunc to receive position data from a visual system. It's expressive, low-latency (2–20ms), and free.

The classic combo: SC audio + TD visuals, linked by OSC — the most common serious live dome workflow.
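To show what travels over that OSC link, here is a minimal sketch that packs a position message by hand using only the standard library, following the OSC 1.0 layout (padded address, padded type-tag string, big-endian 32-bit floats). The address `/source/1/pos` and the meaning of the three floats are hypothetical; in practice a library such as python-osc, or the tool's built-in OSC output, would do this for you.

```python
import socket
import struct


def _osc_string(s: str) -> bytes:
    """OSC strings are ASCII, null-terminated, and padded to a multiple of 4 bytes."""
    b = s.encode("ascii") + b"\x00"
    return b + b"\x00" * (-len(b) % 4)


def osc_message(address: str, *floats: float) -> bytes:
    """Build an OSC 1.0 message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", f) for f in floats)
    return _osc_string(address) + _osc_string(type_tags) + payload


def send_udp(packet: bytes, host: str, port: int) -> None:
    """Fire-and-forget UDP send; OSC over UDP has no delivery guarantee."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))


# Hypothetical address: x, y, z of sound source 1
packet = osc_message("/source/1/pos", 0.5, 0.25, 1.0)
print(len(packet), packet)

# Example only, not executed here: sclang listens on UDP port 57120 by default
# send_udp(packet, "127.0.0.1", 57120)
```

On the SuperCollider side, an `OSCFunc` registered for the same address would receive these three values and could use them to move the source in the soundfield.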

Unreal Engine & Unity

The game engine approach offers photorealistic real-time 3D with physically-based rendering. If your dome show involves virtual environments or anything that needs to look like a real place, this is the path. Unreal's nDisplay drives multi-projector dome rigs. MetaSounds handles generative audio; Wwise covers spatial audio with HOA output.

Unity is simpler to set up, with good spatial audio via FMOD or Resonance Audio. Paul Bourke's Unity fisheye scripts are freely available.

Caveat: Neither engine is designed for the live performance mental model. Adding live control means adding layers.

Max/MSP + IRCAM Spat~

For composers in the electroacoustic tradition, Max/MSP has been the tool since the 1990s. Where it excels in dome contexts is spatial audio: IRCAM Spat~ 5 externals are the gold standard for object-based spatialization. ICST Ambisonics externals (Zurich University of the Arts, also free) handle pure HOA. Max for Live integration with Ableton adds composerly control.

Resolume Arena

Resolume Arena is how many live dome shows actually happen — a skilled VJ mixing pre-produced fisheye content in real-time. Build a library of domemaster clips (4K circular video, DXV codec), set up output mapping, and perform by mixing. Layers, effects, crossfades. Audio reactivity via FFT analysis, BPM detection, Ableton Link sync.

Live Coding in the Dome

Hydra (by Olivia Jack) generates WebGL visuals in real-time from code: osc(20, 0.1, 0.8).rotate(0.5).out(). Capture output via Spout/Syphon and pipe into TD or Resolume for fisheye reprojection. TidalCycles handles algorithmic sound patterns via SuperCollider with ATK for ambisonics. Together: a fully live-coded immersive show.

Python-Based Approaches

TouchDesigner itself is scriptable in Python. Beyond TD, custom pipelines using ModernGL, Pyglet, or vispy give complete rendering control. For audio, pyo handles real-time synthesis; python-osc controls external synths. Total flexibility: if you can code it, you can render it.

📊 At a Glance

| Tool | Dome Output | Spatial Audio | Live Control | Learning Curve | Cost |
|---|---|---|---|---|---|
| TouchDesigner | Native fisheye ★★★★★ | Via OSC bridge | MIDI/OSC/Python | Medium | Free (non-comm) |
| SuperCollider | N/A (audio only) | ATK HOA ★★★★★ | Live coding | Steep | Free |
| Unreal Engine | nDisplay + plugins ★★★★ | Wwise / MetaSounds | OSC / Blueprints | Very steep | Free (rev share) |
| Unity | Custom shaders ★★★ | FMOD / Resonance | C# scripting | Steep | Free (personal) |
| Resolume Arena | Fisheye output ★★★★ | FFT analysis only | MIDI/OSC/DMX | Low | €799 |
| Max/MSP + Jitter | Custom GLSL ★★★ | Spat~ / ICST ★★★★★ | MIDI/OSC/M4L | Medium-steep | $9.99/mo |
| VVVV | Custom shaders ★★★ | Via OSC bridge | MIDI/OSC | Medium-steep | Free (non-comm) |
| Hydra | Via Syphon/Spout ★★ | N/A (visual only) | Live coding | Low | Free |
| Processing / oF | Custom shaders ★★★ | ofxMaxim / Minim | MIDI/OSC | Medium | Free |

🔗 Hybrid Workflows

The best live dome shows almost always combine tools. Three common combinations:

- TouchDesigner → dome visuals (fisheye camera, Kantan Mapper); SuperCollider → dome audio (ATK ambisonics); connected via OSC: SC sends audio analysis, TD sends visual state.

- Ableton Live → musical material (clips, synthesis, live instruments); TouchDesigner → dome visuals (Ableton Link for BPM sync); IEM Plugin Suite in Reaper → HOA ambisonics decoding.

- Hydra → visuals (captured via Spout/NDI); TidalCycles + SC + ATK → spatial audio; TouchDesigner → receives visual feed, applies fisheye, outputs to dome.

Spout (Windows) and Syphon (macOS) enable near-zero-latency GPU texture sharing between applications on the same machine. NDI extends this over the network, at the cost of a few frames of latency. OSC is the universal control layer: any tool that speaks OSC can talk to any other.

🔊 Spatial Audio: Don't Ignore It

The standard: Higher Order Ambisonics (HOA). Encode your soundfield at 3rd order or higher (16+ channels), then decode to your venue's speaker layout. The IEM Plugin Suite (free, from the Institute of Electronic Music and Acoustics in Graz) is the practical entry point. For more sophisticated work: IRCAM Spat~ 5 in Max/MSP handles irregular speaker arrays with object-based spatialization.
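The "16+ channels" figure follows from the fact that an ambisonic signal of order N carries (N + 1)² channels. The sketch below computes that count and, as an illustration of what "encoding" means, pans a single mono sample into a first-order signal. It assumes the AmbiX convention (ACN channel order W, Y, Z, X with SN3D normalization, azimuth measured counter-clockwise from the front); higher orders add more spherical-harmonic terms and are best left to tools such as the IEM Plugin Suite or ATK.

```python
import math


def ambisonic_channels(order: int) -> int:
    """Number of channels in a full-sphere ambisonic signal of the given order."""
    return (order + 1) ** 2


def encode_first_order(sample: float, azimuth_deg: float, elevation_deg: float):
    """Encode one mono sample into first-order AmbiX: returns [W, Y, Z, X].

    Assumes azimuth 0 = front, positive to the left; elevation 90 = zenith.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample                                  # omnidirectional component
    y = sample * math.sin(az) * math.cos(el)    # left-right
    z = sample * math.sin(el)                   # up-down
    x = sample * math.cos(az) * math.cos(el)    # front-back
    return [w, y, z, x]


for order in (1, 2, 3, 5):
    print(f"order {order}: {ambisonic_channels(order)} channels")

print(encode_first_order(1.0, 0.0, 0.0))   # source straight ahead
print(encode_first_order(1.0, 0.0, 90.0))  # source at the zenith
```

The channel count grows quickly with order, which is why the audio interface and speaker count of a venue often set the practical upper limit.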

Minimum viable dome audio: a stereo or ambisonics signal decoded to a ring of 8+ speakers. You won't get full-sphere localization, but you'll have the immersion of sound surrounding the audience.

🏛️ Venues for Live Dome Performance

Not every dome supports live performance. Most planetariums are built for playback — pre-rendered content served from a media server. These venues have made live performance a core part of their programming.

🇨🇦 SAT — Satosphère

The gold standard for live dome performance. The Satosphère at the Société des arts technologiques is the world's first permanent immersive theatre dedicated to artistic creation. Inaugurated in 2011, it runs 8 video projectors and 93 full-range speakers plus 5 sub channels. The SAT offers artist residencies, runs SAT Fest annually (next: March 24–28, 2026), and has a dedicated R&D lab. Their "Dômesicle" series presents regular live performances throughout the year. The SAT actively accepts proposals from artists through their open call.

🇩🇪 Zeiss-Großplanetarium

Berlin's major planetarium hosts a wide range of live events — from concerts and DJ nights to immersive art performances. Part of Stiftung Planetarium Berlin, it regularly programs music events under the dome, combining the planetarium's projection system with live audio. The 23-meter dome and central location make it one of Europe's most accessible venues for immersive live events.

🇩🇪 Planetarium Hamburg

One of the most technically advanced planetariums in Europe. Planetarium Hamburg has a long tradition of live music events, from classical concerts to electronic music nights. The dome's Digistar system supports real-time visual programming alongside live musical performances.

🏴 CULTVR Lab

Home of Fulldome UK (FDUK), CULTVR Lab at Cardiff University runs a flexible immersive dome that has hosted live VJ performances, interactive installations, and experimental art during the annual FDUK festival. Their portable dome setup makes it an accessible testing ground for live dome work.

🇺🇸 Fiske Planetarium

Home of Dome Fest West, Fiske Planetarium at the University of Colorado has hosted live dome performances during the annual festival, including VJ sets and experimental audio-visual works. Their Digistar system and willingness to experiment make it a key venue for the US live dome scene.

🇺🇸 Adler Planetarium

Adler Planetarium — America's first planetarium — has embraced live programming with dome shows, concerts, and immersive events. Their dome theater program includes live-presented shows and special event nights with music and visuals.

🇩🇪 ESO Supernova

The ESO Supernova Planetarium & Visitor Centre is operated by the European Southern Observatory. While primarily educational, it has hosted special live events and experimental fulldome art screenings. It offers a state-of-the-art projection and sound system in a stunning architectural setting.

🇺🇸 U.S. Space & Rocket Center

The planetarium at the U.S. Space & Rocket Center runs an exclusively live and interactive program. Every show is delivered by a live presenter controlling Digistar in real time: flying through the universe, responding to audience questions, and tailoring each show to the specific audience. They also license their live shows to other planetariums. A model for how live presenting transforms the dome experience.

🎭 Performance Formats

Live dome performance isn't one thing — it's a spectrum of approaches, from reactive VJing to fully coded algorithmic art.

VJing in the Dome

A VJ performs real-time visual mixing — blending video clips, generative effects, and live camera feeds, typically synced to music from a DJ or band. The dome version requires fisheye/domemaster output from tools like Resolume, TouchDesigner, or VDMX, mapped to the dome via Blendy Dome VJ or the venue's warping system.

Live Cinema / Live Scoring

Musicians perform a live soundtrack to fulldome visuals — either pre-rendered content or real-time generated imagery. The audio drives the visual experience, creating a unique performance each time. Common at planetarium concert nights and festivals.

Audio-Reactive Generative Art

Visuals are generated algorithmically and respond to audio input in real time. Microphone or audio feed → FFT analysis → visual parameters. Every beat, frequency, and texture of the sound shapes what appears on the dome. TouchDesigner and Processing are the primary tools.
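The chain "audio feed → FFT analysis → visual parameters" can be sketched in a few lines. The example below uses a naive discrete Fourier transform from the standard library so it stays self-contained; real tools use an optimized FFT, and the band names, band edges, and test signal here are assumptions for illustration.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Magnitude of each frequency bin up to Nyquist (naive O(n^2) DFT)."""
    n = len(samples)
    mags = []
    for k in range(n // 2):
        acc = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        mags.append(abs(acc) / n)
    return mags


def band_levels(samples, sample_rate, bands):
    """bands: dict of name -> (low_hz, high_hz). Returns the mean magnitude per band."""
    mags = dft_magnitudes(samples)
    hz_per_bin = sample_rate / len(samples)
    levels = {}
    for name, (lo, hi) in bands.items():
        picked = [m for k, m in enumerate(mags) if lo <= k * hz_per_bin < hi]
        levels[name] = sum(picked) / len(picked) if picked else 0.0
    return levels


# Hypothetical band split and a synthetic 125 Hz test tone standing in for a bass note
SAMPLE_RATE = 8000
BANDS = {"bass": (20, 250), "mid": (250, 2000), "high": (2000, 4000)}
signal = [math.sin(2 * math.pi * 125 * t / SAMPLE_RATE) for t in range(256)]

levels = band_levels(signal, SAMPLE_RATE, BANDS)
# Each level could now drive a visual parameter, e.g. bass -> scale, high -> brightness
print({name: round(level, 4) for name, level in levels.items()})
```

In a live setting the same analysis runs on a sliding window of the incoming audio every frame, usually followed by smoothing so the visuals do not flicker.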

Live Coding (Algorave)

Artists write code live on stage, projecting their screen while algorithms generate music and visuals in real time. Born from the algorave movement, this format uses TidalCycles, Hydra, Sonic Pi, and SuperCollider. The audience watches both the code and its visual output on the dome — radical transparency in art-making.

Interactive Installations

The audience influences what happens on the dome — through motion sensors, mobile phones, or physical interfaces. Dome-wide interactive experiences can turn hundreds of people into co-creators. The DFW forums documented planetariums using audience response systems and live voting to shape show content.

Concerts Under the Dome

Live music performances with dome visuals — from classical orchestras to electronic acts. The dome adds an immersive visual dimension to the concert experience. Planetarium Hamburg and Zeiss-Großplanetarium Berlin are leading venues for this format, hosting everything from ambient to techno.

Dance & Theater

Performance art inside the dome, where dancers or actors are surrounded by responsive projected environments. The SAT Satosphère has hosted dance performances where the dome visuals respond to movement tracked by sensors, creating a fusion of physical and digital performance.

DJ Sets with Dome Visuals

Electronic music DJ sets paired with reactive dome visuals. The DJ's audio output feeds into visual software via FFT analysis, creating visuals that pulse and morph with the music. SAT's Dômesicle series and planetarium "after dark" events are prime examples.

🎬 Featured Performances & Videos

Selected examples of live dome performance — from SAT Montréal's Satosphère to planetarium concerts and VJ sets. These demonstrate the range of what's possible when the dome becomes a live instrument.

The SAT's overview of the Satosphère — the 18-meter dome with 8 projectors and 93 speakers that has been the epicenter of live dome performance since 2011. Shows the venue's capabilities for immersive artistic creation.

🔗 Explore the Satosphère →

Demonstration of the Blendy Dome VJ workflow, from domemaster input via Syphon to multi-projector output with automatic edge blending. Shows how a single laptop can drive live fulldome VJ performances.

🔗 See Blendy Dome VJ →

Robert Henke's laser performance series, developed over 12 years with custom software that later inspired TouchDesigner's laser control tools. The final Lumière III performance was at Mapping Festival, Geneva in May 2025. While not dome-specific, Henke's approach to real-time audio-visual performance has deeply influenced the live dome community.

🔗 Robert Henke: Lumière →

Excerpts from the Recombinant Festival, an event dedicated to immersive live cinema and real-time dome performance. Showcases multiple artists performing live in a dome environment.

▶ Watch on Vimeo →

SAT Fest (next edition: March 24–28, 2026) brings together artists working in immersive creation for live performances, installations, and screenings in the Satosphère. The festival is one of the most important platforms for live dome art globally.

🔗 SAT Events →

Resolume Arena's projection mapping capabilities applied to dome environments, including fisheye output, multi-projector blending, and real-time VJ control with MIDI, OSC, and DMX. Shows how professional VJ software handles dome geometry.

🔗 Resolume Arena →

🎤 Artists to Watch

The live dome scene is still small enough that you can know the key players. These artists and collectives are pushing the form forward.

Pedro Zaz / United VJs

Creator of Blendy Dome VJ — the software that makes fulldome VJing practical. Pedro Zaz and the United VJs collective have performed live in domes worldwide and built the tools others use. Their work bridges the gap between VJ culture and fulldome infrastructure.

Robert Henke

Co-creator of Ableton Live, electronic music artist (as Monolake), and pioneering laser/light installation artist. His Lumière series inspired TouchDesigner's laser tools. Works at the intersection of custom software, generative sound, and immersive visual art. Based in Berlin.

roberthenke.com →

Joanie Lemercier

Projection mapping pioneer working with light as a medium. Known for large-scale architectural projections and immersive installations. His work explores perception, geometry, and the relationship between light and space. Also prominent in climate activism through projected art.

joanielemercier.com →

Nonotak Studio

Collaborative studio of Noemi Schipfer and Takami Nakamoto, creating immersive light and sound installations. Their work — including DAYDREAM, SHIRO, and HOSHI — explores the boundaries of perception through synchronized light, projection, and spatial sound. Exhibited globally.

nonotak.com →

Ryoji Ikeda

Japanese artist working with data, sound, and light at massive scales. His works — test pattern, datamatics, superposition — transform raw data into overwhelming sensory experiences. His immersive installations have been shown in dome-like environments and large-scale projection spaces worldwide.

ryojiikeda.com →

Carrie Berglund / LIPS

Founder of the LIPS (Live Interactive Planetarium Symposium) community and Digitalis Education Solutions. Advocates for live, interactive planetarium presentations over passive playback. Documented extensively in the DFW forums as a leader in the live presenting movement.

Ed Lantz / Vortex Immersion

Founder of Vortex Immersion Media, designing physical spaces for immersive experiences. Speaker at DFW forums on venue design for live performance. Advocates for dome venues that serve both playback and live performance needs, with flexible technical infrastructure.

Alba G. Corral

Barcelona-based visual artist and creative coder who performs live audiovisual sets with orchestras, electronic musicians, and in immersive spaces. Her work spans projection mapping (Mapping Casa Navàs, Lux Mundi), immersive dome performances (end(O)), and collaborations with symphonic orchestras. She codes her own visual instruments, creating generative organic forms that respond to live music — a bridge between classical performance, creative coding, and dome art.

albagcorral.com →

Olivia Jack

Creator of Hydra, the browser-based live coding visual synthesizer used in algorave and live coding performances worldwide. Her work makes real-time visual creation accessible to anyone with a web browser, lowering the barrier to live dome visuals.

🚀 Getting Started: Your First Live Dome Show

Want to perform live in a dome? Here's a practical path from zero to dome.

Step-by-Step Guide

1. Learn a content tool. Start with TouchDesigner (free non-commercial license); it has the strongest dome support and the largest community. Complete the official tutorials, then experiment with domemaster rendering using a fisheye camera setup or cubemap-to-fisheye techniques.

2. Output via Syphon/Spout. Configure your content tool to send its output via Syphon (macOS) or Spout (Windows). This is the standard way to connect to dome mapping software. Test with a Syphon/Spout viewer app to confirm your output is working.

3. Get dome mapping software. Download Blendy Dome VJ; it includes a dome simulator so you can preview how your content looks on a curved surface without access to a real dome. Configure it to receive your Syphon/Spout output.

4. Add control. Connect a MIDI controller (any basic one works) and map faders/knobs to visual parameters in your content tool. This transforms your laptop into a playable instrument. Add audio reactivity via FFT analysis for music-driven visuals.

5. Find a dome. Contact venues that support live performance; SAT Montréal has an open call for proposals. Portable/inflatable domes at festivals (FDUK, Dome Fest West, Dome Dreaming) are often more accessible than fixed planetariums. Some maker spaces and universities have small domes you can access.

6. Test in the actual dome. Visuals that look good on a flat screen often don't work in a dome: the geometry is different, the brightness varies across the surface, and the audience perspective changes everything. Budget time for in-dome testing and adjustment. Dark backgrounds work better than bright ones.

7. Consider spatial audio. If the venue has a multi-speaker system, explore spatial audio tools; the free IEM Plugin Suite works with any DAW and supports Ambisonics encoding/decoding. Even basic left-right panning in a dome makes a huge difference.

Common Pitfalls

- Resolution expectations. Real-time rendering at 4K×4K domemaster (what large venues need) requires serious GPU power. Start at 2K×2K and optimize.

- Zenith blindness. Artists often focus on the horizon and forget the top of the dome. Always check how your content looks at the zenith.

- Brightness. Multi-projector domes have limited brightness compared to flat screens. Avoid content that relies on bright whites — they'll look washed out. Design for contrast.

- Motion sickness. Fast camera rotations and rolling motions cause nausea in dome environments more than any other display. Keep camera movements slow and deliberate.

- Audio sync. If your visuals are audio-reactive, test latency carefully. A few frames of audio-visual desync can feel deeply wrong in an immersive environment.
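The resolution pitfall above is easy to quantify. The sketch below compares the pixel throughput of 4K×4K and 2K×2K domemasters and shows how much of the square frame the fisheye circle actually covers. It assumes "4K" means 4096 pixels per side and a 60 fps target; actual GPU cost also depends heavily on shader complexity.

```python
import math


def domemaster_pixels(side: int) -> dict:
    """Pixel counts for a square domemaster frame with an inscribed fisheye circle."""
    total = side * side
    visible = math.pi * (side / 2) ** 2  # area of the inscribed circle
    return {"total": total, "visible": visible, "visible_fraction": visible / total}


def pixels_per_second(side: int, fps: float) -> float:
    """Raw pixel throughput the renderer has to sustain."""
    return side * side * fps


for side in (2048, 4096):
    info = domemaster_pixels(side)
    rate = pixels_per_second(side, 60.0)
    print(f"{side}x{side}: {info['total']:,} px/frame, {rate / 1e9:.2f} Gpx/s at 60 fps")

ratio = pixels_per_second(4096, 60.0) / pixels_per_second(2048, 60.0)
print(f"4K costs {ratio:.0f}x the pixels of 2K")
print(f"fisheye circle covers {domemaster_pixels(4096)['visible_fraction']:.1%} of the frame")
```

Doubling the side length quadruples the work, so developing at 2K×2K and scaling up only once the show is stable keeps iteration fast. The corners outside the fisheye circle are never projected, so shaders that skip them save roughly a fifth of the per-frame cost.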